-

Card expiration

There is no point in repeating that expiring passwords and keys is a bad idea. Plenty has been written about that already.

But it is curious why plastic cards follow the same pattern. By default they are valid for three years. Why only three? What stops a card from working for five, seven, fifteen years? Does the chip go stale? Obviously nothing stands in the way: all it takes is changing a date in a database.

Would a bank clerk enjoy having to replace the lock on his front door every three years? Or taking the car to the dealer to have the electronics reflashed, because otherwise you cannot get into the car? Or a laptop that refuses to boot after three years, so you drive to an Apple office to have it renewed? Yet with cards this is considered normal.

The main point is that the bank itself ignores the three-year rule whenever it feels like it. Premium cards, for example, are issued for seven years because they are made of metal and reissuing them every three years is expensive. Or when sanctions were imposed on Russia, banks temporarily ran out of plastic for new cards. And what did the banks do? They simply extended the cards' validity. Text messages went out en masse: your card *1234 now works until 2030.

Online payment services followed suit: they stopped checking the card date, because people automatically type the one printed on the card rather than the one from the text message.

So the question is: was that allowed all along? As we can see, yes. Expiration by time is an artificial thing, and it is easily moved when the need arises. Nobody died, the banking system did not collapse. The trouble is that only whoever introduced such a rule can move it, which means not you and me.

-

Java

I have a new pet project, and the main thing is that it is written in Java, not Clojure. I have been at it for two weeks, and here are my impressions.

Modern Java is unexpectedly good. If you take the latest version, 21, and don't cling to ancient legacy, writing in it is quite pleasant. For instance, you can use records instead of classes everywhere: they are immutable classes that save code and bring nice things out of the box.
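To make "nice things out of the box" concrete, here is a minimal sketch. The record and its fields are hypothetical, picked only for illustration; the point is what the compiler generates for you.

```java
// Hypothetical message type, used only for illustration.
record ParameterStatus(String name, String value) {
    // Compact constructor: validate once; the fields are final afterwards.
    ParameterStatus {
        if (name == null || name.isBlank()) {
            throw new IllegalArgumentException("name must not be blank");
        }
    }
}

class RecordsDemo {
    public static void main(String[] args) {
        var a = new ParameterStatus("client_encoding", "UTF8");
        var b = new ParameterStatus("client_encoding", "UTF8");
        System.out.println(a);            // toString() is generated
        System.out.println(a.equals(b));  // equals()/hashCode() compare by value
        System.out.println(a.name());     // accessors are generated; there are no setters
    }
}
```

A plain class with the same behavior would need a constructor, two getters, equals, hashCode and toString written by hand.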

Or use pattern matching on classes, for example:

```java
switch (msg) {
    case BindComplete ignored:
        break;
    case AuthenticationOk ignored:
        break;
    case AuthenticationCleartextPassword ignored:
        handleAuthenticationCleartextPassword();
        break;
    case ParameterStatus x:
        handleParameterStatus(x);
        break;
    default:
        throw new SomeException("no branch");
}
```

Long ago this was done with the Visitor pattern. The mere mention of it makes sand grind between my teeth. Now it takes a few lines. Modern Java needs fewer and fewer patterns because the language supports them natively. This happens slowly, a teaspoon a year, but in this case slowness means inevitability.

Java 21 brought virtual threads. With them you can write blocking code, and the JDK itself decides when a thread can be parked and resumed. Of course, you need to know the details of the process. Recently I tried virtual threads from Clojure, and it was really pleasant.
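A rough sketch of what "write blocking code" looks like in practice, assuming Java 21. The slowCall task is hypothetical and only imitates a slow network call. The same blocking code runs on either executor; only the factory method changes.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class VirtualThreadsDemo {

    // Hypothetical blocking task: sleeps to imitate a slow network call.
    static int slowCall(int n) throws InterruptedException {
        Thread.sleep(Duration.ofMillis(100));
        return n * 2;
    }

    // Runs `count` blocking tasks on the given executor and sums the results.
    static int runAll(ExecutorService executor, int count) throws Exception {
        try (executor) { // ExecutorService is AutoCloseable since Java 19
            List<Future<Integer>> futures = new ArrayList<>();
            for (int i = 0; i < count; i++) {
                final int n = i;
                futures.add(executor.submit(() -> slowCall(n)));
            }
            int sum = 0;
            for (Future<Integer> f : futures) {
                sum += f.get(); // plain blocking wait
            }
            return sum;
        }
    }

    public static void main(String[] args) throws Exception {
        // Platform threads: at most 8 tasks sleep at the same time.
        System.out.println(runAll(Executors.newFixedThreadPool(8), 100));
        // Virtual threads: one cheap thread per task.
        System.out.println(runAll(Executors.newVirtualThreadPerTaskExecutor(), 100));
    }
}
```

Both calls print the same sum; the second one should finish noticeably faster because all hundred sleeps overlap, though I have not measured it here.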

The Java tooling deserves a mention. IntelliJ IDEA Community Edition is very good. It picks up everything and suggests accurate options in the dropdowns. Clojure has nothing like it and never will.

Yes, Java has no REPL, but look: I wrote a Main class that launches the code. I press the green arrow and everything runs. If something crashes, I put a breakpoint on the line and press the button with the bug. Execution stops at the mark, I see the local state, fix the code, and run it again. Lovely.

Clojure, by contrast, has no built-in debugger at all. There is a debugger in emacs-cider, but it is odd and works every other time. I wrote the bogus debugger for myself, and it has saved me two hundred times, no less. But my little tool changes nothing: three anonymous users use it, and the rest debug with prints or tap.

Of course, starting a project on Java 21 is a utopia. Wherever you go, legacy will be waiting for you, and it would be lucky to get upgraded to Java 17. There will be patterns, partially initialized POJOs, and other delights. But a hobby on fresh Java, why not?

Java evolves so fast that you cannot keep up. Mountains of money are poured into it, and some of the smartest people in the world work on it. You can feel it. With virtual threads, for example, they had to walk through the whole SDK and fix every blocking call. A colossal job! All so that a programmer could replace FixedPoolExecutor with VirtualThreadExecutor and, whoosh, off it goes.

Not everyone accepts this reality. I know a person who is sure that Java is Oracle's property (at least not Sun's), that you have to pay for it, that it is slow and eats a lot of memory. He last wrote Java 15 years ago. A cozy little world of delusions is more pleasant than reality.

None of this means I have signed up as a Java developer. It is just that there is a lot to learn from Java in terms of evolution. Worth taking note.

-

Thumbnails

I cannot look at thumbnails where the author makes faces. For example, puts on a sad or angry face. Twists the mouth. Or the title says “Choosing X vs Y”, and the author props up the chin and looks upward, as if deep in thought.

Remember Little Raccoon? Don't make faces, smile instead! And it will come back to you.

I won't give examples so as not to offend anyone. But damn, how dumb this is. Although there is a benefit even here: if the author makes faces, the video is not worth watching.

-

On titles

A short note on how to write titles. The main rule: a title must not make assumptions about the reader or dictate steps to them. The reader is a completely neutral party. Telling them what to do is like breaking the fourth wall, in other words, yuck.

Examples of bad titles:

- 10 facts about JavaScript that every developer must know. I owe you nothing, get lost.

- 20 facts about Python that you didn't know. I wrote in it for 8 years, I know all of them.

- Why your code sucks. It is yours that sucks, don't shift the blame onto me.

- Watch this video right now or bookmark it. Thanks, I will decide for myself what to watch, when, and where to keep it.

It surprises me that seemingly decent people every now and then title their material in this spirit. One Clojure developer wrote an article called “Why your REPL experience sucks”. I looked at the code, and he had badly messed up there. Interesting how that works: he is the one who messes up, yet it is my experience that sucks.

Or I saw a Meduza headline: some video came out, watch it now or bookmark it. Should I also send a written report on the viewing? And forward the link to ten acquaintances?

You must not lecture the reader about what they know and don't know, or what to watch and when. The reader will figure it out perfectly well.

-

DVD quests

A memory came over me, and I want to share it with you.

In my childhood there was no internet, and content came on DVDs: films, software, music by favorite bands. And I must say that besides a disc's contents I was always interested in its design.

A DVD could hold not only a film but also an interactive menu. For example, when the disc started, a menu appeared where you could choose to watch from the beginning, pick a specific episode, or open fan content: short films, backstage footage, and so on.

Technically, a menu could be either a picture or a video. Menus with video in the background looked great, especially when the buttons were animated too, for example with short scenes from the episodes. Buttons worked as hot areas: you could click on them. A click took the viewer to another menu or a video.

For a while I worked at the Altes TV channel, and this came in very handy there. We often burned local artists' music videos to DVD, and before me there was no disc design to speak of. People picked the default menu in the burning software, and that was it. I went the extra mile and made menus in each artist's style. It was a sensation, and this kind of thing became the standard: we would like one with a menu, please, like those guys had.

So, I had plenty of DVDs, pretty and not so pretty, but there is one I will never forget. At the time I was into the band Korn (I still love them, just less), and I came across one of their discs. I thought it was an ordinary collection of music videos, but what was inside exceeded all expectations.

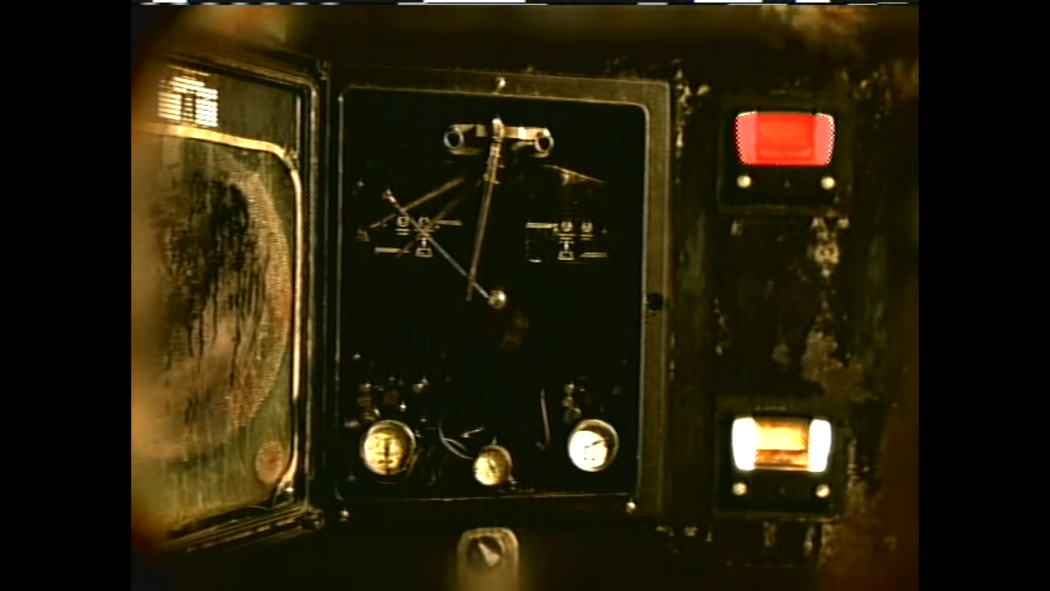

It was a real DVD quest! The action is shown in first person. At the start the poor fellow is wheeled on a gurney into a madhouse à la Silent Hill. Wheelchairs, bars, and hooks on the ceiling are everywhere. The hero gets up and starts exploring the location.

Fragments of this disc turned up on YouTube. Below are a frame from the intro and a link to the video:

https://www.youtube.com/watch?v=KkioRvvyVRw

Each location is a room with interactive objects. On looking at an object, the hero has a seizure and is shown a video of Korn. Mostly it is backstage footage with the musicians stoned in a trailer, rehearsal videos, or just goofing around. While wandering the madhouse you can end up in a security room with a bunch of TVs, and clicking each one plays a music video. The content had to be found!

Navigation between rooms deserves a special mention. Each room was not a static picture but a video. For example, the hooks on the ceiling swayed and clanked, the wiring sparked, water ran from punctured pipes. Ominous ambient played in the background. In one of the rooms a madman in a straitjacket sat in an electric chair. A set of clichés, but how well it was all put together!

If you “touched” an object, such as a skull on the table or an anatomical atlas, the hero “saw” the object's history: who had held it before you, what had happened in the room, and so on.

Navigation between rooms was coherent: if you clicked a door, a clip played of the hero opening it and entering another location. If you clicked a vent in the wall, he stood on tiptoe to peek at what was going on inside. There were gags with flying through pipes full of worms and woodlice. All together it gave such a sense of presence that words fail me.

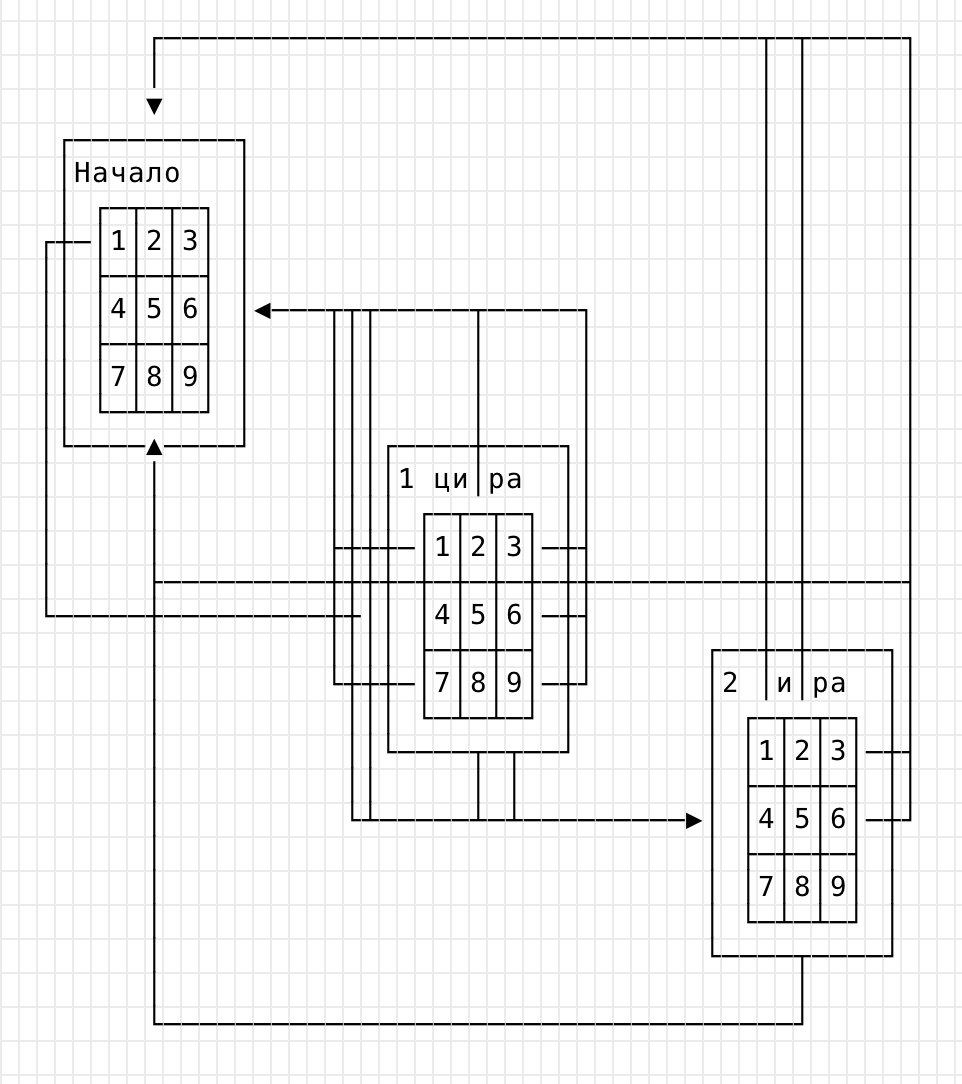

And finally: there was a hidden location. Can you imagine? A secret room! On a DVD! In one place there was a staircase to the basement, and down there a door with a combination lock. The lock was a separate menu with buttons from 1 to 9. You had to enter four correct digits. On a mistake you were thrown back to the start of the input.

The link below shows another puzzle from this disc. Apparently either there were two of them or I am mixing something up.

https://www.youtube.com/watch?v=ofR1hijB1rw

Can you imagine how hard that was to make? A DVD has no variables or state; it can only show a video or a menu. So the disc's creators made five scenes with the lock. The first is when nothing has been entered: one button leads forward and the rest lead back. The second is when the first digit is correct; there a different button leads forward and the rest lead to the first scene. The third is when two digits are entered, the fourth when three are entered, and the last correct digit opens the door.

So you can appreciate the scale, let me draw the transition graph:

See? I haven't even drawn two full scenes, and the lines already make it impossible to tell what is going on. And there were at least five of those scenes.
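The scene-per-state trick can also be written down as a transition table. This is only a sketch of the idea, not how the disc was actually authored: I count four menu scenes plus the door-opening clip, and the code 4-2-7-1 is made up because I don't remember the real one.

```java
import java.util.ArrayList;
import java.util.List;

class DvdLock {
    static final int OPEN = -1; // pseudo-target: the door-opening clip

    // One menu scene: button d (1..9) jumps to targets[d - 1].
    record Scene(int[] targets) {}

    // Builds one scene per number of correctly entered digits.
    static List<Scene> build(int[] code) {
        List<Scene> scenes = new ArrayList<>();
        for (int pos = 0; pos < code.length; pos++) {
            int[] targets = new int[9];
            int next = (pos == code.length - 1) ? OPEN : pos + 1;
            for (int digit = 1; digit <= 9; digit++) {
                // The right button moves forward, any other resets to scene 0.
                targets[digit - 1] = (digit == code[pos]) ? next : 0;
            }
            scenes.add(new Scene(targets));
        }
        return scenes;
    }

    // The "player" keeps nothing but the index of the current scene.
    static boolean press(List<Scene> scenes, int... buttons) {
        int scene = 0;
        for (int b : buttons) {
            scene = scenes.get(scene).targets()[b - 1];
            if (scene == OPEN) return true;
        }
        return false;
    }

    public static void main(String[] args) {
        int[] code = {4, 2, 7, 1}; // hypothetical code
        List<Scene> scenes = build(code);
        System.out.println(scenes.size() * 9);           // 36 button links to wire up
        System.out.println(press(scenes, 4, 2, 7, 1));   // true
        System.out.println(press(scenes, 4, 2, 7, 2));   // false
    }
}
```

In code it is one loop; on the disc every one of those 36 links had to be drawn and wired by hand in the authoring program.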

The door code was 19… something: the year the band was formed. Behind the door was a morgue with truly trashy decor. The musicians' corpses lay on the shelves; if you examined them, you were shown an utterly bizarre video.

What a DVD that was! I am writing about it because I want to give its creators their due. They really went all out! They had to find a suitable basement, lay out the props, shoot everything, edit it, and add creepy effects. They had to think through the map, the routes, and which video to place where. The combination lock scene alone was as complex as a quarter of the whole project. And all of that in software designed for a couple of menus! My sincere respect.

How many hours I spent wandering those basements! It was like a game and yet not a game: a quest inside the TV.

And although I never saw similar discs myself, a friend told me about something like it. He had an official DVD of the film “Men in Black”, where the viewer ended up in a laboratory. You could either watch the film or explore the locations and peek into objects where fan content was hidden.

As I write this, I realize nothing like it can exist today. We have streaming services and torrents, but neither involves any design: no menus, no fan content.

This is by no means regret about the past, but an attempt to immortalize it. Yes, there was such a fun thing. Yes, it was cool. Yes, yes.

And now back to today.

-

Data-Driven Development is a Lie

UPD: there is a discussion of this article on Hacker News. Thank you, Mike, for letting me know.

In the Clojure community, people often discuss such things as data-driven development. It is like you don’t write any code or logic. Instead, you declare data structures, primarily maps, and whoosh: there is a kind of Deus ex Machina that evaluates these maps and does the stuff.

That’s OK when newcomers believe in such things. But I feel nervous when even experienced programmers tell fairy tales about the miracle that DDD brings to the scene. That’s a lie.

I’ve been doing Clojure for nine years, and DDD is useful in rare cases only. Yes, in some circumstances, it saves one’s time indeed. But only sometimes, not always! And it’s unfair: people give talks at conferences about how successful they were with DDD in their project. But they would never give a speech about how they messed up by describing everything with maps.

Let me give you an example. Imagine we’re implementing a restriction system. There is a context, and we must decide whether to allow or prohibit the incoming request. Obviously, every Clojure developer would do that with maps. We declare a vector of maps where each map represents a subset of the context. Should at least one rule match the context, we allow the request.
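A minimal sketch of that setup, written in Java rather than Clojure only to keep one language across the examples here. The rule keys and values are hypothetical, invented for illustration.

```java
import java.util.List;
import java.util.Map;

class Restrictions {

    // A rule matches when every key/value pair of the rule is present in the context.
    static boolean matches(Map<String, Object> rule, Map<String, Object> context) {
        return context.entrySet().containsAll(rule.entrySet());
    }

    // The request is allowed if at least one rule matches.
    static boolean allowed(List<Map<String, Object>> rules, Map<String, Object> context) {
        return rules.stream().anyMatch(rule -> matches(rule, context));
    }

    public static void main(String[] args) {
        // Hypothetical rules: each map is a subset the context must contain.
        List<Map<String, Object>> rules = List.of(
            Map.of("role", "admin"),
            Map.of("role", "user", "country", "FR"));

        System.out.println(allowed(rules, Map.of("role", "user", "country", "FR", "ip", "10.0.0.1"))); // true
        System.out.println(allowed(rules, Map.of("role", "user", "country", "DE")));                   // false
    }
}
```

Plain equality on every key is all such a rule can express in this form.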

-

UI and emptiness

I have written about the problems of modern design more than once, but I will repeat myself. One of the main problems is the excess of empty space. Where information could fit, there is a gaping void, and useful things are hidden behind a dropdown.

It is simply the scourge of our time! Monitor resolutions grow, Retina has arrived, phones have turned into shovels, and yet there is less information than on an old CRT monitor.

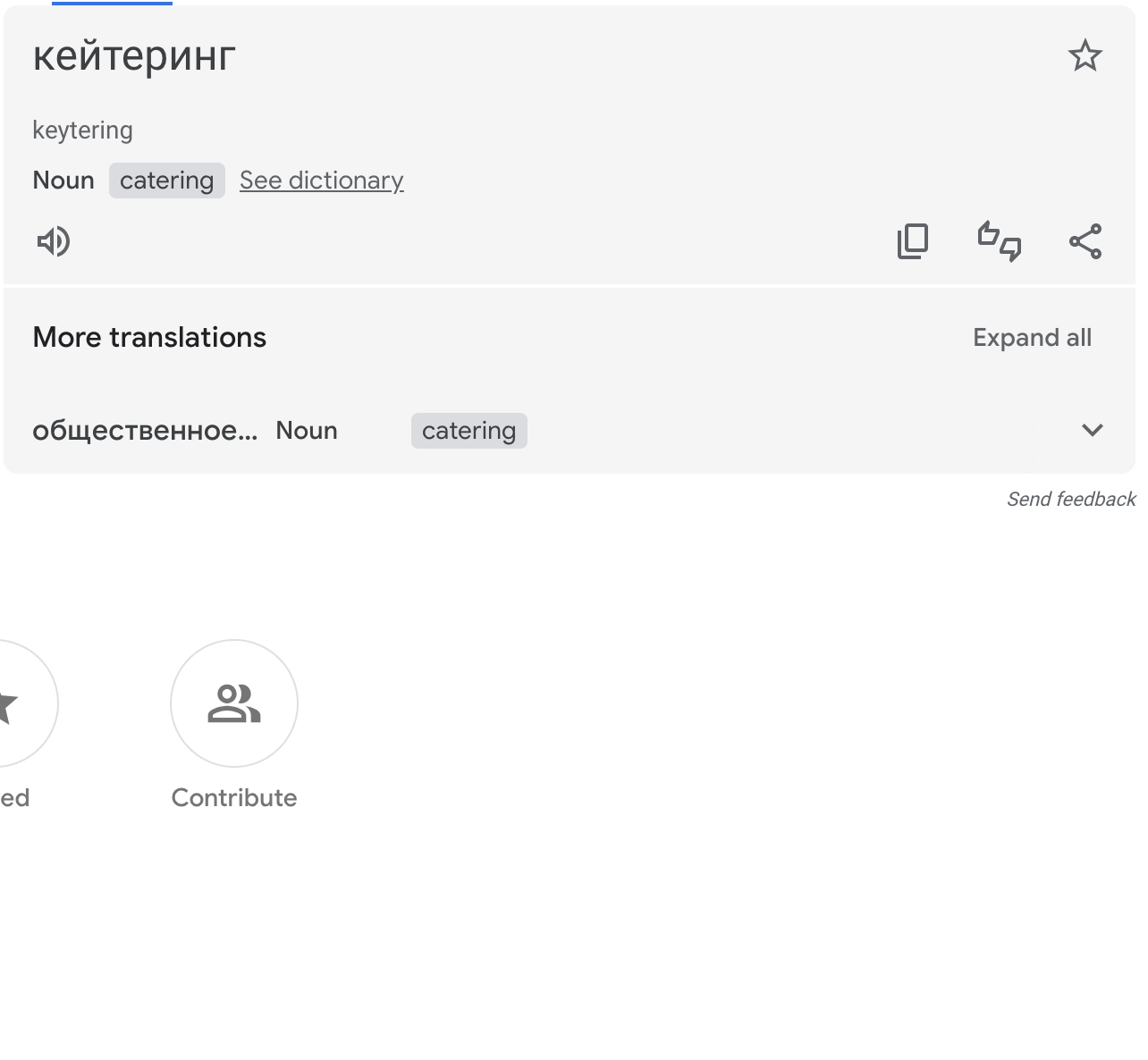

From today: the Google Translate interface. Note the “More translations” section. Why collapse it? Below it there is nothing but emptiness, so what prevents showing the other translations right away?

(A caption to show where the screenshot ends)

Fine, they hid it behind a dropdown. But then why not entirely? Why did the first option, “общественное…”, still stick out? And if it did, why truncate it with an ellipsis? There is plenty of room for “общественное питание” (public catering). There is A TON of empty space; everything would fit with room to spare.

Designers, I really want to know: why do you hide information? Who taught you this? It is bad, unlearn it quickly.

And recently someone in a chat brought up the book Refactoring UI. Reworking an interface: a good title, has someone finally come to their senses? I read the key points, and there it is:

Instead, try adding a box shadow, using contrasting background colors, or simply adding more space between elements.

And I want to jump out of the window. Not only do some freaks flush useful space down the toilet; books are being written that directly recommend it! Make it better, add emptiness!

Please don't buy this book. Have mercy on the users.

-

Highlighting in Telegram

Telegram now highlights links and quotes. So we get blue, green, pink, and light blue all together, one after another. Plus ugly fonts in the GitHub preview cards.

Well done, you tried. One question: why? To what end?

-

Eastern fairy tales

When I read Eastern fairy tales as a child, I paid attention to the plot: who killed and deceived whom, whose wife was stolen. Rereading them now, I notice much that a child cannot see but an adult understands.

The most interesting observation is the psychological type of ancient people. They are literally big children. The population of Persia lives in three states: joy, anger, fear. The switch from one state to another happens instantly, as in the mentally ill.

Here a character has eaten and drunk, and he feels good. The next minute a servant contradicts him; now he is shouting and demanding a beheading. The vizier appears, and he falls into fear and weeps. All of this within a few minutes.

The heroes never speak calmly. The slightest disagreement or objection, and they shout and weep; in short, anything but calmly discussing a decision.

Another observation: the hero sees no shame in acting underhandedly. You may have forgotten how the original tale of Aladdin ends, so let me remind you. He sneaks into the castle of the enemy who has abducted the princess. He and the princess agree: she will seduce the villain and put him to sleep with a sleeping powder, and Aladdin will kill him. And so it goes: Aladdin stands behind a curtain, and when the villain collapses asleep, he calmly cuts off his head.

This differs from the European canon, in which the hero enters the fight openly. And not only openly but on equal terms: no lasers or machine guns, one on one, with bare fists. Imagine a blockbuster where the hero kills the enemy with a knife in the back and drives home. The audience would be unhappy.

While we are at it: in the original, Aladdin's name is Ala Ad’Din. Such a complicated name, which was simplified for the foreign reader.

-

The site got hacked

This is a provocative title: in fact my site was not hacked. It is just that I often hear how someone's site was hacked and something seditious was posted on it. I want to speak out on this.

For sites not to get hacked, their resilience must be built into the architecture. The more layers it has, the more vulnerabilities the site has. Take a WordPress blog: that is Linux, Apache, PHP, MySQL, and JavaScript. Together they behave like a tangle of snakes. Each technology has its own quirks, vulnerabilities (known and not yet known), configs, and settings. The probability that all of them are configured correctly is rarely one hundred percent.

You probably think hackers are geniuses in glasses and trench coats, like in The Matrix. They know machine code, solve crypto hashes on paper, and all that. Not so. Modern hackers are boys who at best know Python or bash well enough to write a loop. Their job boils down to pointing a dangerous script at a site. If the site is known to run CMS version X, and it is out of date by even a year, then have no doubt: the site is up only because it has not attracted attention yet.

My point is that a site's security comes from its being static. There is a set of md files and a script that builds a static site. The result is a folder with index.html and subfolders holding the articles. Such a site can be hosted anywhere, from S3 to a home router. There is only one way to break it: steal the SSH key or the AWS credentials, which has nothing to do with the site itself.

It surprises me that although most sites could be static, they are still built on WordPress and Django. They crash, devour resources, and drain money from the budget. Meanwhile there is almost no interactivity on the site: at best a contact form that sends a request into the internal document workflow.

You would think: if interactivity is really that necessary, make a static site and attach a lambda or some other backend to the form to receive requests. Even if the backend goes down, the site keeps working. But no, sites are still built in scripting languages.

Many years ago my blog ran on Ilya Birman's Aegea. It is the classic Apache + PHP + MySQL stack. How much I suffered with it! The hosting provider kept changing the PHP settings, and machine warnings showed up on the front page. How can you live in fear that there is some garbage on the front page and the database backup did not run?

After moving to Jekyll I breathed a sigh of relief. Once generated, a static site cannot go bad. It will be the same tomorrow and in ten years. It can be hosted anywhere, even without Apache and PHP.

Fine, but how do you update information on the site, such as tariffs or branch addresses? Very simple: every night a JSON or CSV file with the tariffs is exported from the system. The site's sources contain a template that runs over the rows and renders them nicely. The output is clean HTML, and everyone is happy. The build can be triggered manually if it is urgent.
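The "template that runs over the rows" step can be as small as the sketch below. The semicolon-separated format and the tariff names are assumptions made for the example; a real build would read the exported file and most likely use the site generator's own templates.

```java
import java.util.List;

class TariffPage {

    // Escapes the characters that are special in HTML text.
    static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;").replace(">", "&gt;");
    }

    // Renders semicolon-separated lines into an HTML table, one row per line.
    static String render(List<String> csvLines) {
        StringBuilder html = new StringBuilder("<table>\n");
        for (String line : csvLines) {
            html.append("  <tr>");
            for (String cell : line.split(";", -1)) {
                html.append("<td>").append(escape(cell.trim())).append("</td>");
            }
            html.append("</tr>\n");
        }
        return html.append("</table>\n").toString();
    }

    public static void main(String[] args) {
        // Hypothetical nightly export, inlined here instead of being read from a file.
        List<String> export = List.of("Basic;100", "Premium;250");
        System.out.print(render(export));
    }
}
```

The result is a fragment of plain HTML that gets written into the generated folder and stays as it is until the next build.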

In short, to keep your sites from being broken into, make them static wherever possible. Even if a site involves a user account area and other interactivity, it is right to separate the wheat from the chaff, that is, static pages from dynamic ones. And build the former on something like Jekyll or a similar engine.

Writing on programming, education, books and negotiations.